
Global threats are not limited to wars, cyberattacks, or disinformation campaigns. The digital space has also been occupied by less visible—yet no less aggressive—actors whose target is human trust and whose method is manipulation.

This is precisely the story of the so-called “Church of Almighty God,” a Chinese sect also known as “Eastern Lightning.” It emerged in China in the late 20th century and built its doctrine on the claim that Christ has already returned in the body of a Chinese woman. In the mid-1990s, Chinese authorities banned the sect, but the network itself did not disappear: it expanded beyond China and is now recruiting people in various countries, including Ukraine.

Representatives of CAG do not show up at your door in black cloaks, brochures in hand. They enter through social media feeds, especially Facebook, in the form of touching images, AI-generated videos with religious icons, posts about soldiers “whom no one congratulated,” and puppies “looking for a home.” All of this works as emotional bait: people like and share these posts, CAG pages gain reach, social media algorithms push them to new audiences, and eventually users are drawn into closed chats.

At this stage, it appears that CAG’s primary goal is the indoctrination of followers. At the same time, there have previously been allegations of intimidation and extortion for “salvation.” In any case, a structure that deceives people into joining closed communities, systematically “processes” them, and subordinates them to its doctrine creates a controlled circle of loyal followers and an environment where people are much easier to manipulate.

From “amen” in the comments to a closed chat: recruitment in Ukraine

In Ukraine, the “Church of Almighty God” disguises itself on social media as something familiar and “local.” On Facebook, these are pages, groups, and accounts with names that evoke religious or emotional sentiments, such as “Prayer,” “God’s Love,” “Orthodox Family,” “Happy Birthday Greetings,” or “Cards for Friends.” On the surface, they look like ordinary religious or greeting communities. But on closer inspection, the façade begins to crack: Ukrainian-language content is often administered from abroad, while the network reveals itself through language mistakes, calques, and odd mismatches between text and images.

Despite these inconsistencies, the CAG recruitment scheme works. It is designed not for careful reading, but for quick emotional reactions. People feel that not liking, commenting, or sharing means failing to show support. This is where the manipulation strikes a very vulnerable point. Not everyone can donate to the army. But when someone sees a post about a soldier supposedly asking for just a few kind words, they may sincerely want to offer support in that way. It is precisely this human need to care that CAG turns into a tool to increase its reach and recruit new followers.

The scheme is simple—and that is exactly why it is dangerous. It works in three steps:

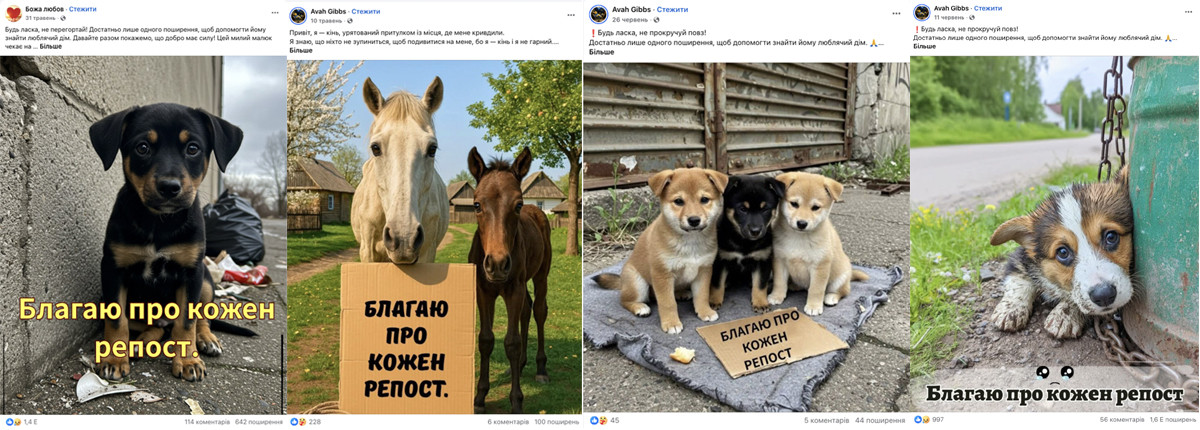

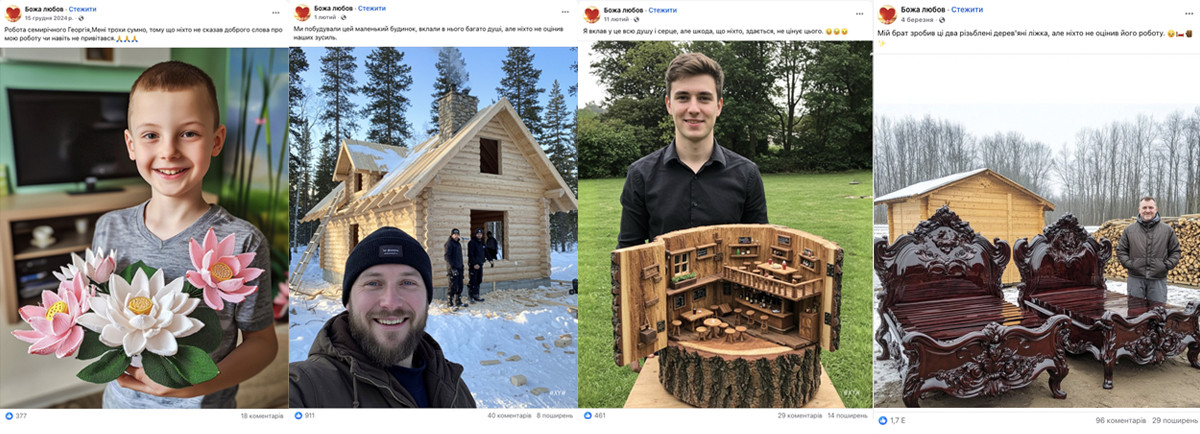

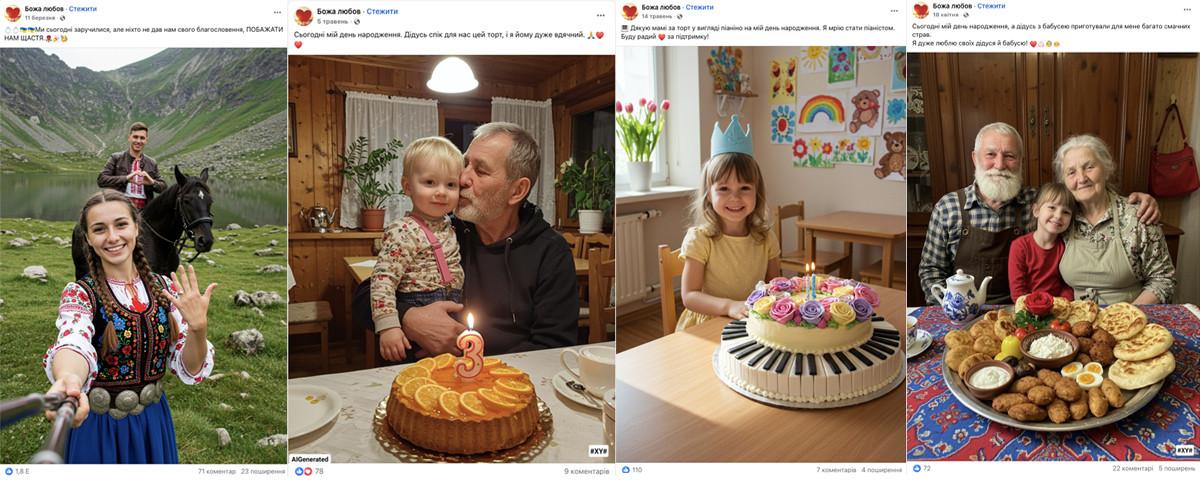

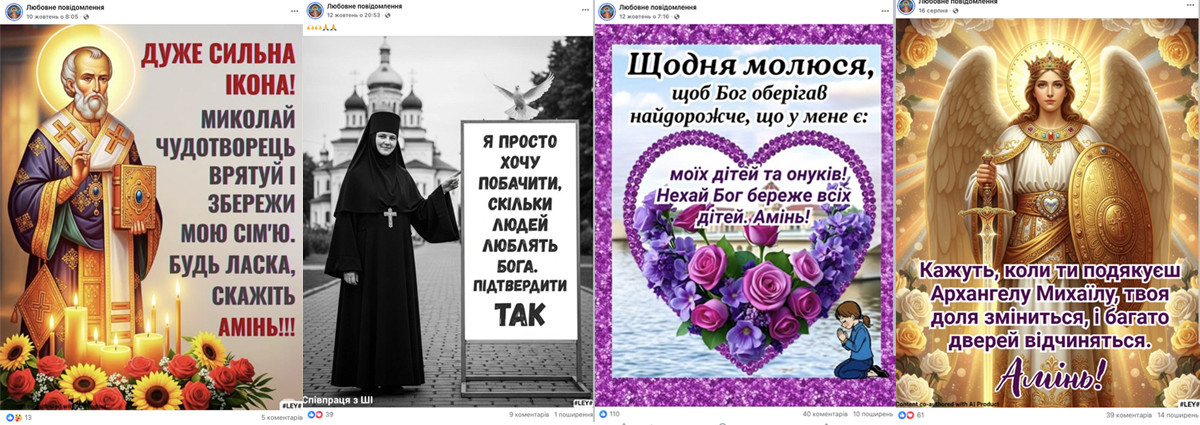

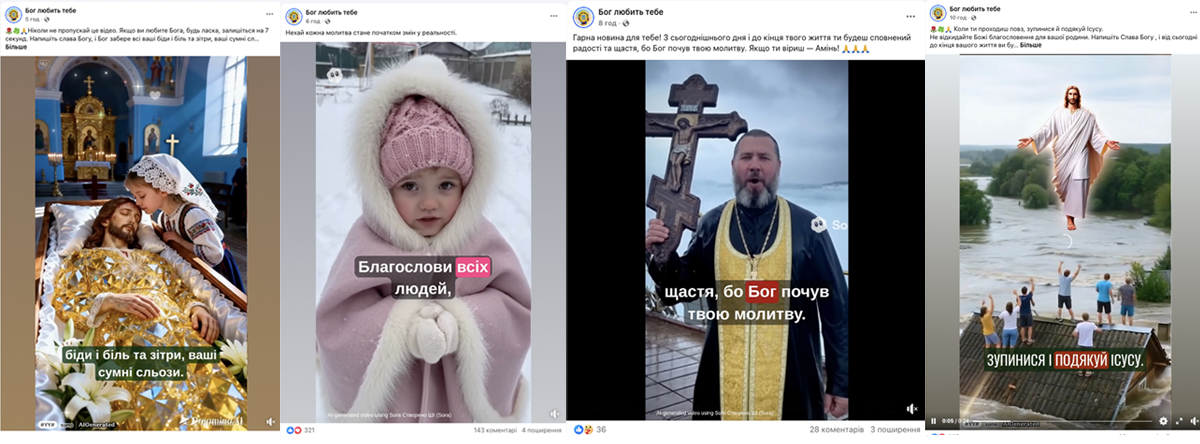

Step 1. Emotional clickbait. CAG publishes AI-generated content on patriotic, everyday, or religious themes to collect likes, comments, and shares. The goal is straightforward: to increase reach and lay the groundwork for the next stage of manipulation. The most common formats include:

Images of Ukrainian soldiers with “prosthetics,” “on the front line,” set against scenic backdrops, with captions like “He deserves at least one ‘thank you,’” “Give them love,” “Leave a heart and I will protect you,” “I wonder how many people will congratulate me and wish me happiness,” etc.

Images of animals (mostly dogs) “looking for a home,” with pleas to repost or captions like “let us pray for animals who suffer too.”

Images of people (often children) with captions such as “I made this myself, but no one appreciated it.”

Images of people whom “no one congratulated” on special occasions (weddings, birthdays, the birth of a child, etc.).

Images of religious symbols, believers, and saints.

The latest trend is AI-generated videos featuring icons, saints, priests, and children who appear to walk, “recite prayers,” and urge viewers to write “amen.”

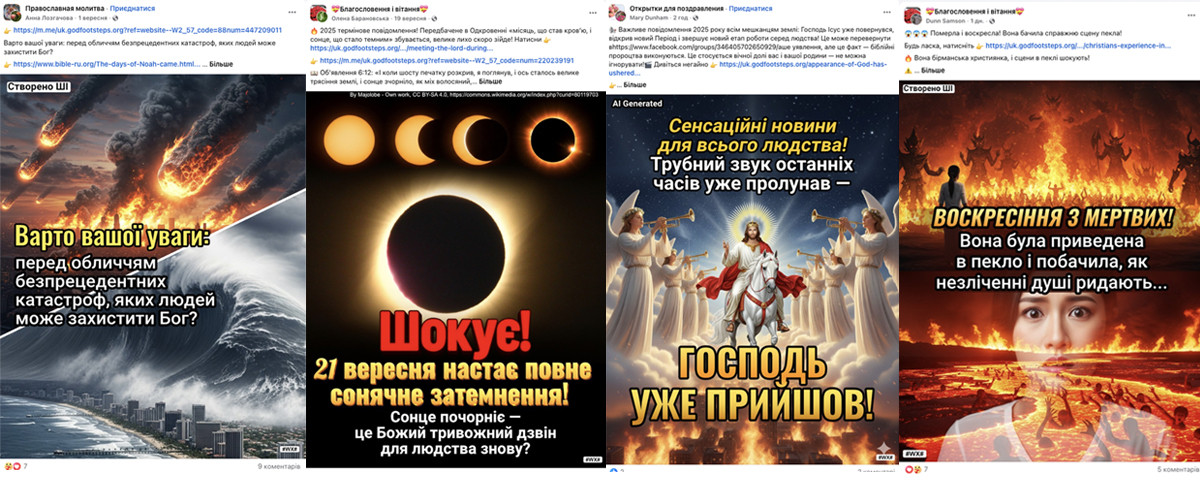

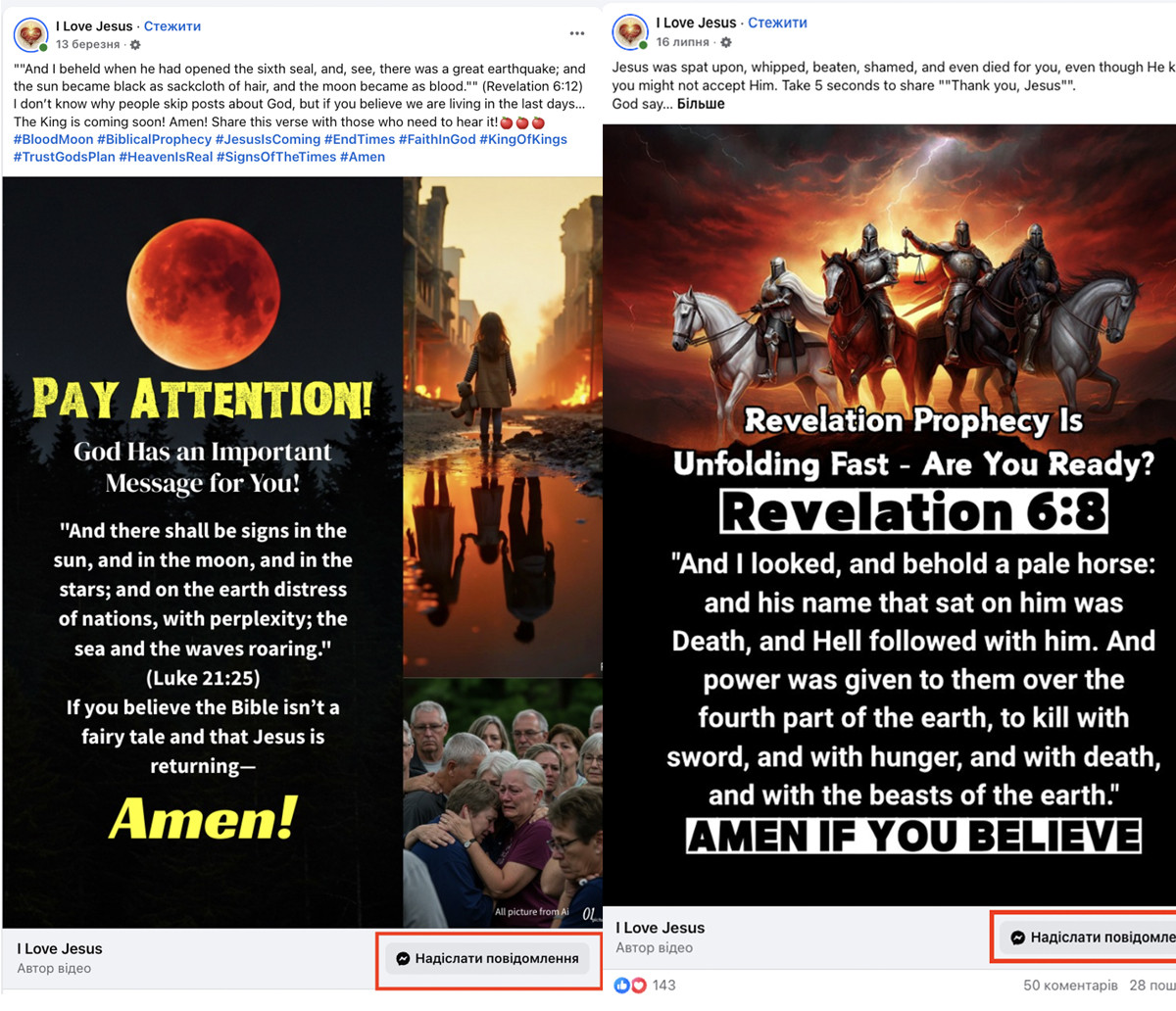

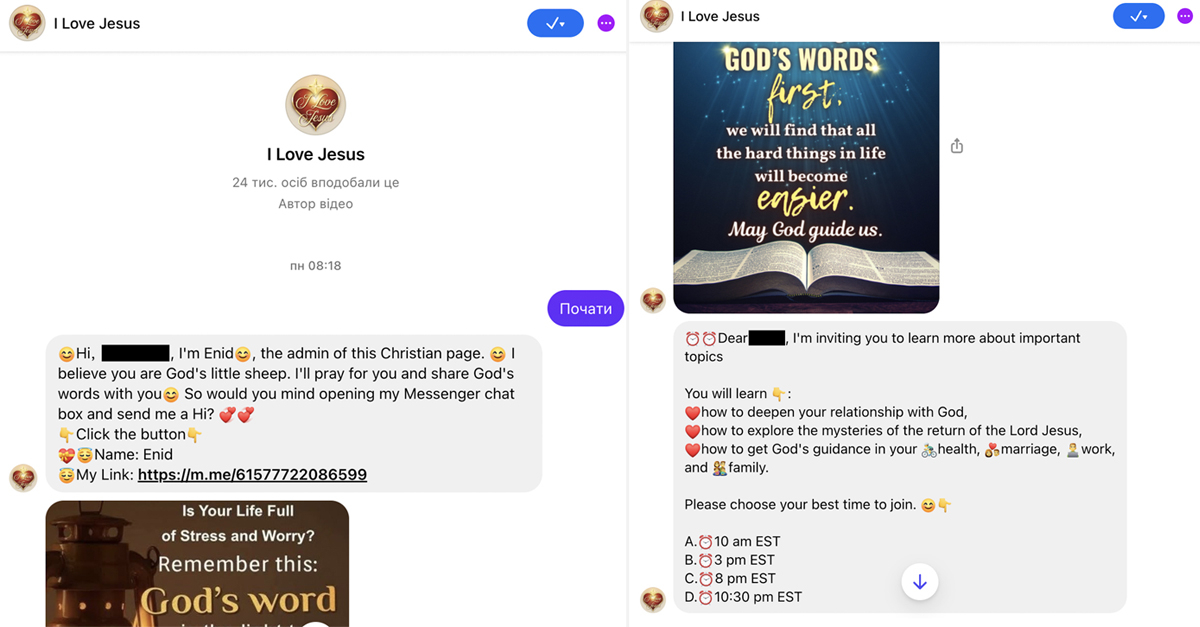

Step 2. Apocalyptic fearmongering with links to closed messaging groups. Once a clickbait post has gained traction, it may be edited, or new apocalyptic posts begin to appear alongside it, such as “The end of the world is near” and “To survive, learn the truth.” Users are then nudged to move to WhatsApp or Facebook Messenger, supposedly for prayer, explanations, or a “special seminar.”

Step 3. Ideological “processing” in closed WhatsApp or Facebook Messenger groups. A person is added to a “sermon” group where, alongside a few newcomers, there is already a team of administrators and “theologians” from the Chinese sect. What follows is an “8-day intensive,” daily calls, reminders, attendance monitoring, personal messages, and a gradual shift from an Orthodox façade to the core doctrine of the “CAG.” At this stage, the goal is no longer to attract interest but to retain, condition, and subordinate.

Let’s call it what it is: when a community systematically manipulates emotions, builds an audience through deception, and draws people into closed chats, this is no longer a “mission” or “religious service.” It is a recruitment technology.

“Eastern Lightning” is rumbling in the West as well

Ukraine is far from the only country where the “Church of Almighty God” has expanded its activities. Today, it is a global organization that has long moved beyond China and operates in dozens of countries—from Europe to Latin America and Asia.

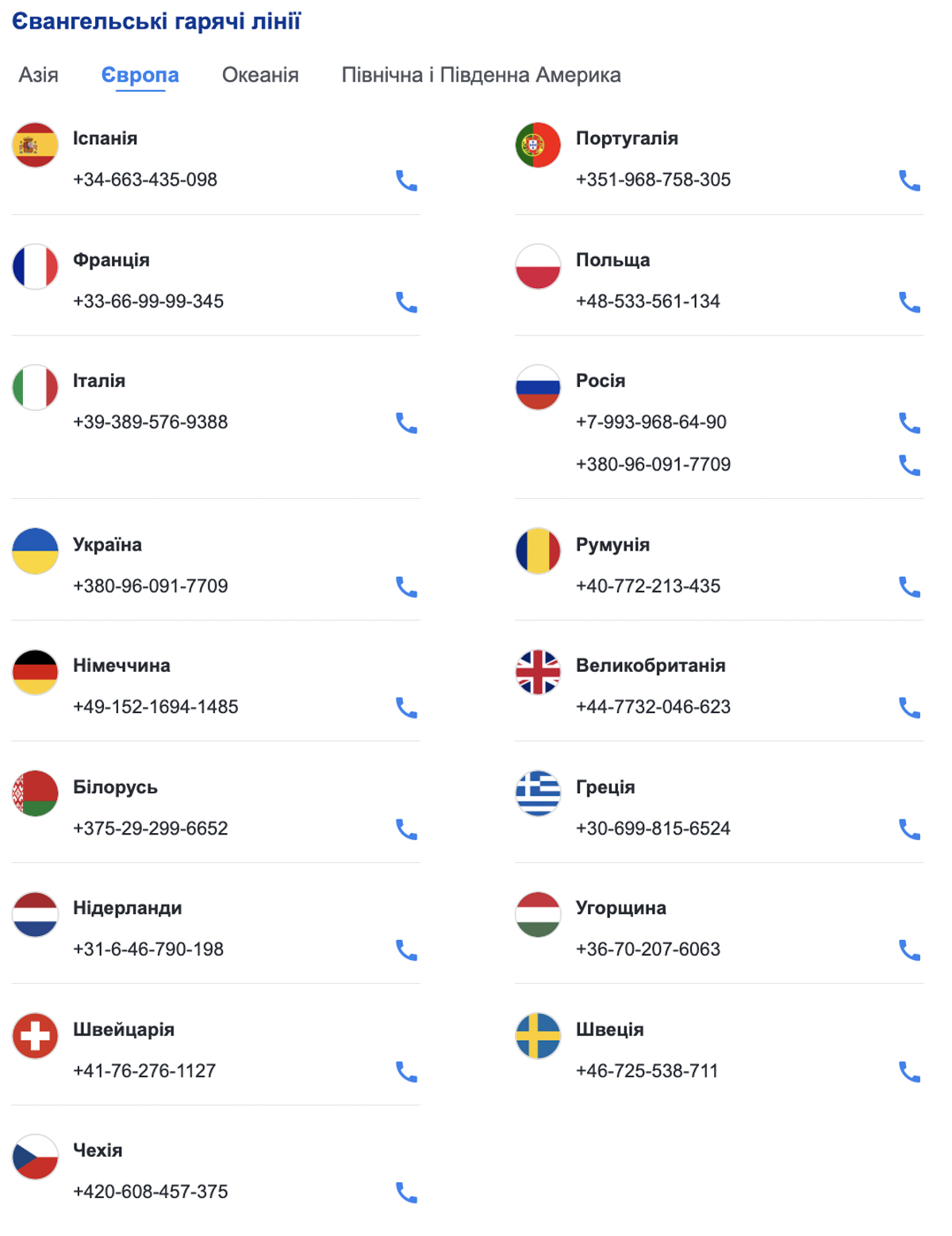

As of mid-March 2026, CAG has launched “gospel hotlines” in 42 countries worldwide, including 17 in Europe.

CAG replicates the same recruitment scenarios everywhere, including in Ukraine. The differences lie only in language and local “decor”; the underlying mechanics remain the same.

The scheme is identical across countries:

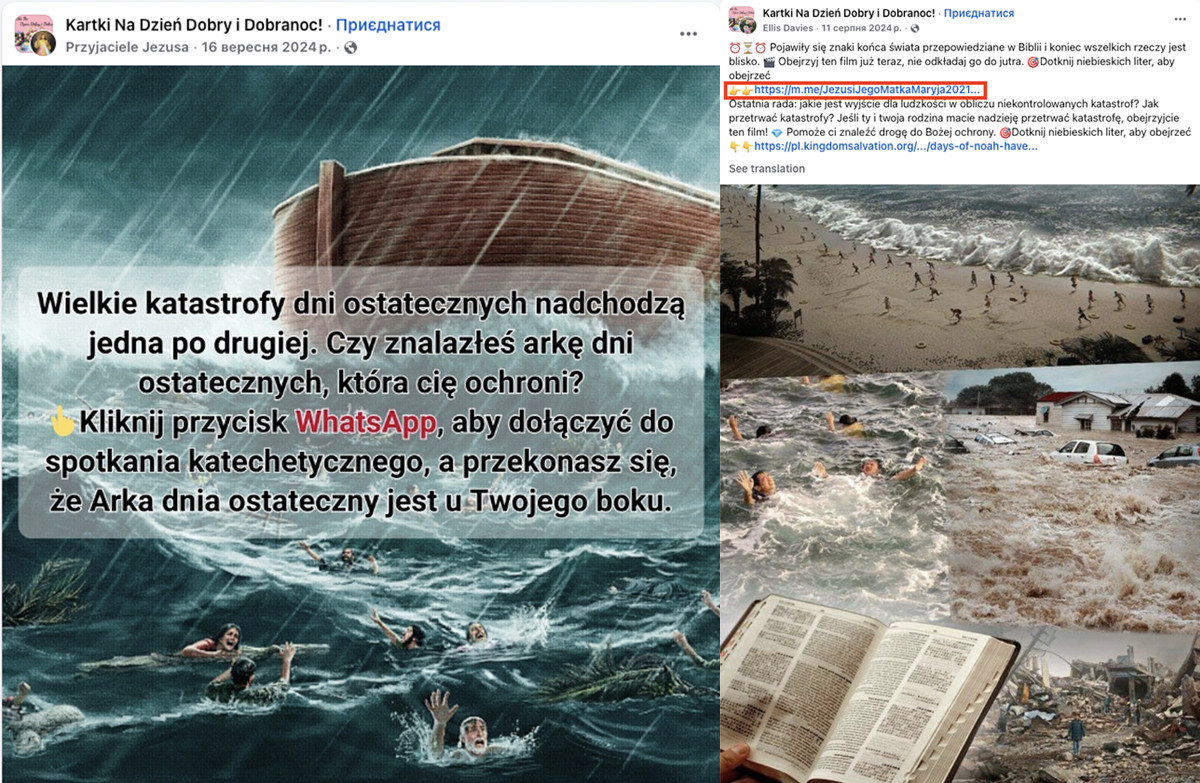

Mimicking the dominant religion. First, CAG representatives create pages, groups, and accounts that appear “local”: in Poland—“Catholic,” in Romania—“Orthodox,” and so on. Names, visuals, and language are tailored to feel familiar and trustworthy within each country.

Emotional clickbait. Next come emotionally charged stories, “miraculous healings,” “religious blessings,” and requests to write “amen” or “congratulations,” leave reactions, or share posts—all aimed at boosting engagement and expanding reach.

Local adaptation. The clickbait content is slightly adapted to local contexts. It is published in widely used languages, often with noticeable mistakes (as in Ukraine), likely because CAG leadership does not delegate content control to local followers. Emotional narratives are also adjusted: for example, while war-related themes are heavily exploited in Ukraine, in Poland, Catholic symbols and the figure of John Paul II are often used as trust markers.

Apocalyptic framing. “Prophecies” and “signs of the end times” begin to appear, increasing anxiety and prompting urgent action.

Migration to messengers. Posts include invitations to closed WhatsApp or Facebook Messenger groups, supposedly for “prayers,” “blessings,” or “special events.”

Closed sermons and doctrinal shift. Inside private chats, the content is gradually replaced with CAG teachings, building loyalty and leading participants toward regular “services” and donations.

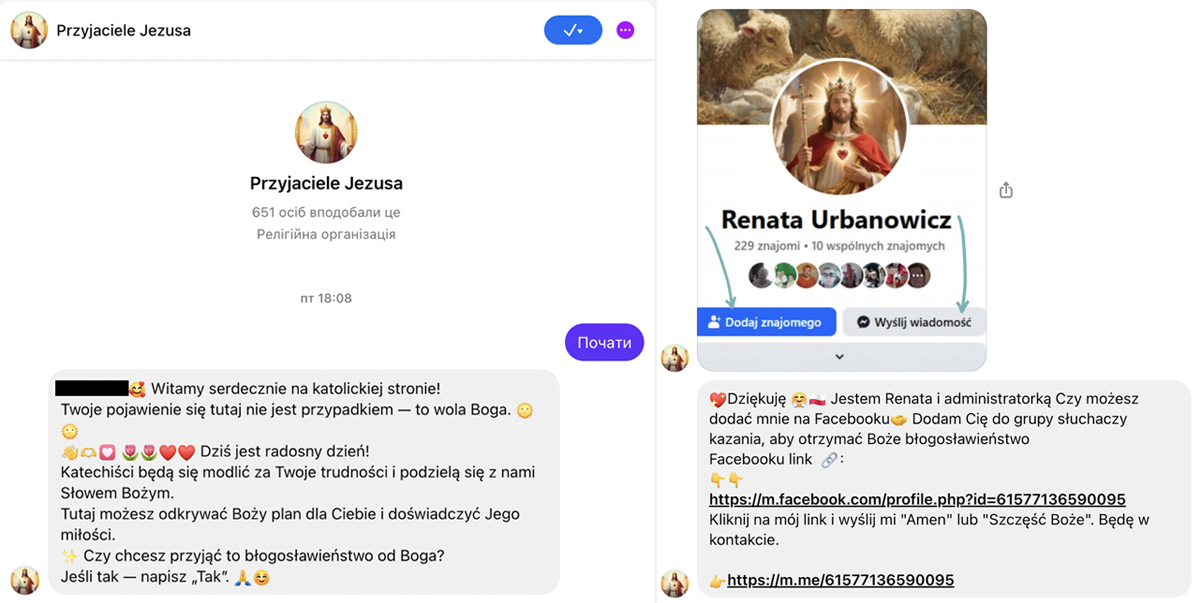

Example of recruiting “followers” to CAG in Poland

Step 1. Communities linked to CAG publish clickbait content.

Step 2. They publish apocalyptic posts with links to a messenger.

Step 3. The so-called “CAG doctors of theology” brainwash users through the messenger.

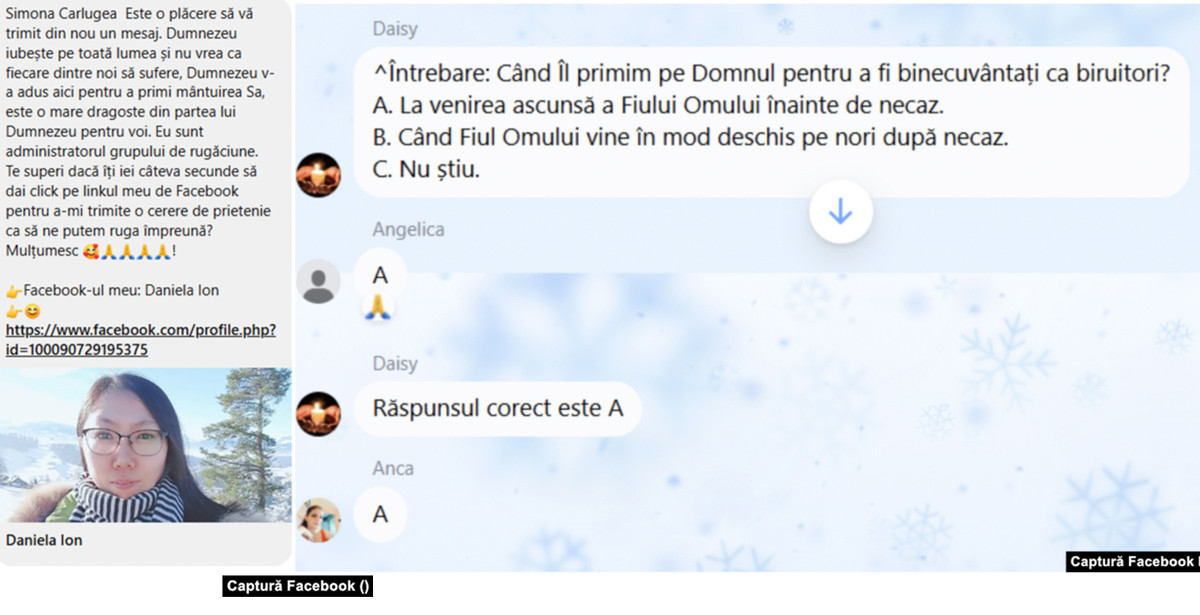

Example of recruiting “followers” to CAG in Romania

Step 1. Communities linked to CAG publish clickbait content.

Radio Free Europe/Radio Liberty has investigated CAG’s activity in the Romanian segment of Facebook.

Step 2. They publish apocalyptic posts with links to a messenger.

Step 3. The so-called “CAG doctors of theology” brainwash users through the messenger.

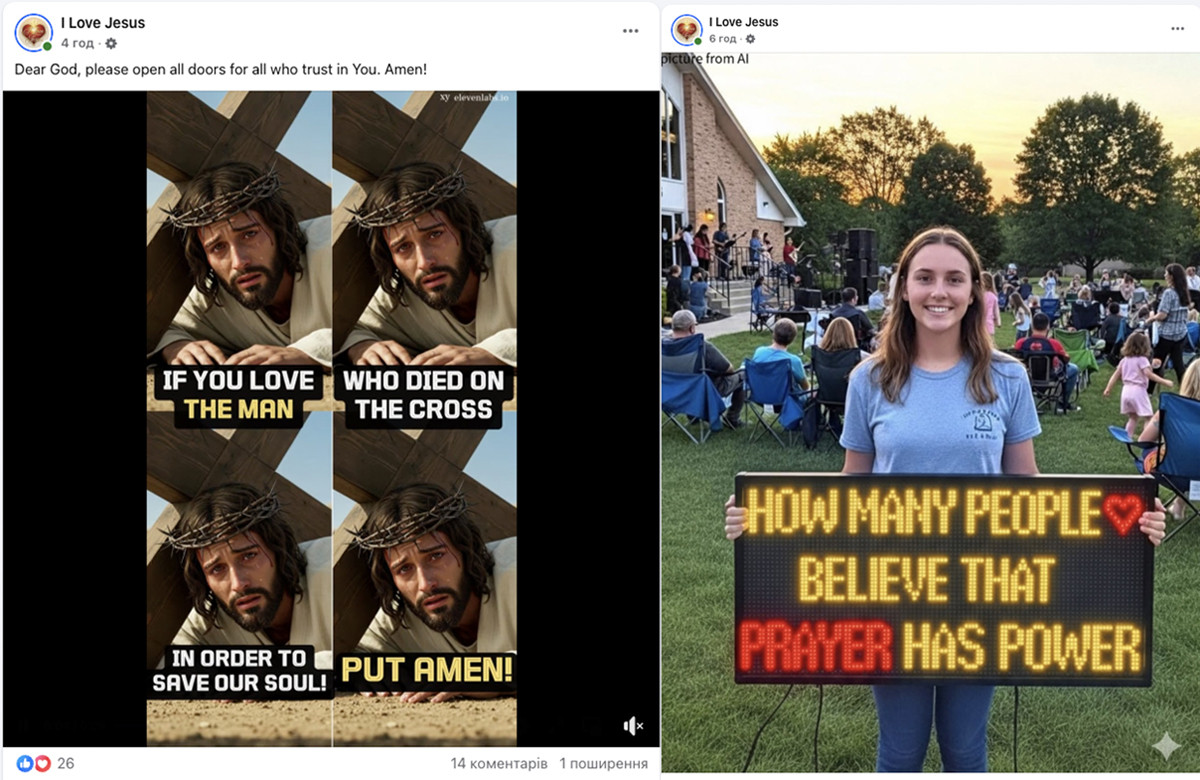

Example of recruiting “followers” to CAG in the United States

Step 1. Communities linked to CAG publish clickbait content.

Step 2. They publish apocalyptic posts with links to a messenger.

Step 3. The so-called “CAG doctors of theology” brainwash users through the messenger.

Why this scheme works across countries

Social engineering through familiar imagery. Social engineering is often discussed in the context of phishing, but it also plays a key role in the activity of clickbait pages. CAG adapts to the dominant religious identity: in Catholic countries it poses as Catholic, and in Orthodox countries as Orthodox. Beyond general religious motifs, it also exploits locally relevant themes (for example, the war in Ukraine).

Algorithmic exploitation of platforms. Emotional posts generate user reactions, and platform algorithms then amplify them further.

Personalized “processing” in messengers. Closed chats are not subject to active external moderation and allow for systematic, personalized indoctrination—from general Christian messages to the specific doctrine of CAG.

Five tips to avoid falling for manipulators: folk wisdom for the digital age

To avoid feeding manipulative online schemes with your likes and shares, keep a few simple rules in mind—something like folk wisdom for the digital age:

- Not everything that shines is sacred. Especially on Facebook. A flashy post with talking icons or saints is not necessarily honest or genuine.

- Rush to like or comment—and you may amuse others… and please CAG. Every like is “fuel” for algorithms and a reward for manipulators. When you click, you help boost the popularity of the content and the page behind it. Don’t rush to engage with emotionally charged posts—that’s exactly what manipulators rely on.

- Measure seven times, share once. Check the pages you follow. Go to the page → “About” → “Page Transparency.” Look at when the page was created, what its previous names were, and where its admins are located. If a page was created recently, has changed names multiple times, or is managed from outside Ukraine, this is a red flag. When viewing images or videos, look for signs of AI generation. Sometimes there is a label (“AI-generated”), usually in a corner. If not, examine the visuals: do they look overly polished? Is any text strange or unreadable? Are there extra fingers? These may indicate AI content. Always consider the context. Check fact-checking projects such as NotaYenota, VoxCheck, and StopFake, which regularly report on new manipulation schemes and explain how to protect yourself. Only after thoroughly checking should you hit “share.”

- A repost is like a sparrow—once it flies out, you can’t catch it. When you click “Share,” the post instantly appears in your friends’ feeds, triggering a chain of comments, likes, and further shares. Even if you delete it later, the algorithms have already recorded the interaction.

- A tree stands on its roots, a person on family, and a social media user on critical thinking. If a post plays on your emotions or personal concerns, that’s a reason to pause. Before engaging with any content—especially emotional content—ask yourself two questions: who is posting this, and why? Only this kind of critical, balanced approach can help navigate the constant flow of manipulation in the digital space.
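
For readers who like to see a rule written down, the page checks from the third tip (creation date, previous names, admin location) can be expressed as a short checklist in code. This is only an illustrative sketch, not a detection tool. The `PageInfo` record and the `red_flags` function are hypothetical names, the details are assumed to be copied by hand from the “Page Transparency” panel, and the thresholds (under 365 days counts as “recently,” two or more previous names counts as “multiple times”) are assumptions rather than figures from this article.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PageInfo:
    """Details a reader copies by hand from a page's "Page Transparency" panel."""
    created: date
    previous_names: list[str] = field(default_factory=list)
    admin_countries: list[str] = field(default_factory=list)


def red_flags(page: PageInfo, today: date, home_country: str = "Ukraine",
              recent_days: int = 365) -> list[str]:
    """Return the warning signs from the checklist that apply to this page."""
    flags = []
    # "Created recently": the 365-day cutoff is an assumption for illustration.
    if (today - page.created).days < recent_days:
        flags.append("created recently")
    # "Changed names multiple times": read here as two or more previous names.
    if len(page.previous_names) >= 2:
        flags.append("changed names multiple times")
    # "Managed from outside Ukraine": any admin located in another country.
    if any(country != home_country for country in page.admin_countries):
        flags.append("managed from abroad")
    return flags


# Hypothetical page, invented for illustration only.
example = PageInfo(
    created=date(2025, 11, 1),
    previous_names=["Cards for Friends", "Prayer"],
    admin_countries=["Ukraine", "Country X"],
)
print(red_flags(example, today=date(2026, 3, 15)))
```

An empty result does not prove a page is trustworthy, and a flag does not prove it belongs to CAG. The output is a prompt to look closer before liking or sharing.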